Developer productivity, adoption & challenges with AI generated code

The growing popularity of vibe coding platforms has led to more LLM usage for code creation, in the hope of accelerating app development. The start of 2025 saw a boom in vibe coding platforms such as Lovable, Vercel v0, Bolt, Replit, Kiro and Base44, which simplified developer workflows, enabled web app creation, and promised end-to-end app development. However, the quality and consistency of the generated code is highly debated, which creates roadblocks for developer adoption.

The hype!

- Anyone can become a programmer

- End-to-end app development using simple prompts

- Super cheap alternative to hiring and building dev teams

Anyone can become a programmer

Today, LLMs have evolved to generate accurate programming language syntax, follow best practices, and produce well-defined code solutions. Given a well-guided problem statement and appropriate application context, they recognize known patterns in app architecture and produce meaningful framework code.

Vibe coding platforms produce a lot of code, which is good for bootstrapping an app prototype. But as you start to iterate and try to make things work, it feels like taking one step forward and two steps back (the ‘two steps back pattern’ described in the article The 70% problem: Hard truths about AI-assisted coding by Addy Osmani). One needs to be a programmer to review, debug and validate the code generated by these AI code gen tools.

- Are you sure the code follows best practices for authorization and access control?

- Is the password masked or encrypted in transit?

- Is there an auth token for authentication?

- Are the inputs sanitized before the system stores them in the database?

- Are you sure there is no business logic in the client-side JavaScript code?

Not all working code is secure and reliable. To validate and accept code suggestions, you need to be a real programmer and that’s the catch!
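One of the review questions above, whether inputs are handled safely before they reach the database, can be made concrete with a small sketch. It assumes Python's built-in `sqlite3` module and a hypothetical `comments` table; the function name `save_comment` is illustrative only. Parameter binding is one common safeguard against SQL injection, not a complete input validation strategy.

```python
import sqlite3


def save_comment(conn: sqlite3.Connection, author: str, body: str) -> None:
    """Store user input using bound parameters instead of string formatting."""
    # The ? placeholders pass the values separately from the SQL text,
    # so hostile input is stored as plain data rather than executed.
    conn.execute(
        "INSERT INTO comments (author, body) VALUES (?, ?)",
        (author, body),
    )
    conn.commit()


# Hypothetical schema and input, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE comments (author TEXT, body TEXT)")
hostile = "'); DROP TABLE comments; --"
save_comment(conn, "mallory", hostile)
rows = conn.execute("SELECT author, body FROM comments").fetchall()
```

A reviewer checking AI generated code would look for the opposite pattern, SQL assembled with string concatenation or f-strings, and treat it as a defect even when the feature appears to work.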

Complete app development using simple prompts

LLM context window sizes are increasing, evolving to solve large problems and generate considerable portions of apps with layers of complex framework related artifacts, code and dependent libraries. Vibe coding platforms are leveraging these recent LLM improvements to generate entire app scaffolding with business logic to create web apps, based on frameworks like React, Next.js, Node.js, etc.

Vibe coding platforms index and store portions of codebase, so that the vibe coder can express ‘intent’ in plain text (English for now) and the underlying system retrieves and submits these code snippets as context to the LLM for feature generation. This is a very important process and a lot of code and prompt text gets transmitted back and forth between the LLM and the code editor.

Tokens = Syntactical strings ~ (code + prompt text + knowledge base [code examples, tools])

LLMs consume a lot of tokens to generate the code that you desire.
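To get a feel for how quickly tokens add up, here is a rough sketch that sums the three ingredients named above. It assumes a rule of thumb of about four characters per token, which is only an approximation; real tokenizers differ by model and by content. The `Request` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field

CHARS_PER_TOKEN = 4.0  # assumption: rough rule of thumb, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate based on character count."""
    if not text:
        return 0
    return max(1, round(len(text) / CHARS_PER_TOKEN))


@dataclass
class Request:
    """One round trip to the LLM: prompt plus retrieved context."""
    prompt: str
    code_snippets: list[str] = field(default_factory=list)
    knowledge_base: list[str] = field(default_factory=list)

    def input_tokens(self) -> int:
        parts = [self.prompt, *self.code_snippets, *self.knowledge_base]
        return sum(estimate_tokens(part) for part in parts)


# Placeholder content: a short prompt, a 4,000-character code snippet
# and a 2,000-character knowledge base entry.
request = Request(
    prompt="Add a logout button to the navbar",
    code_snippets=["x" * 4000],
    knowledge_base=["y" * 2000],
)
total = request.input_tokens()
```

In this sketch the one-line prompt is a tiny fraction of the total; the retrieved code and knowledge base entries dominate what is sent on every iteration.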

In reality, only simple features get built with simple prompts; anything complex needs more context and has to go through several iterations. Even after these iterations, chances are high that one ends up debugging the code by asking the LLM to explain it and identify what’s missing!

Super cheap alternative to hiring and building large dev teams

Any complex application development requires a lot of planning, documentation, and decisions regarding architecture, technology stack and developer skillset. LLMs today have matured and are good at automating development tasks such as:

- Bootstrapping boilerplate code

- Documenting code snippets

- Identifying architectural flows

- Enforcing coding best practices

- Generating well-defined code solutions

- Identifying bugs

- Generating test cases etc.

However, a team of professionals is still needed, with an in-depth understanding of the organization’s needs, domain expertise, the ability to understand legacy architectures and the experience to make the right technology and architectural choices. LLMs can automate certain development tasks only after a strong foundation is laid by experienced developers. Hence, it is not yet viable to build core applications and systems with a group of junior developers or non-technical people assisted by LLMs.

App generation with LLMs today is a token frenzy: millions or even billions of tokens are needed to build anything practical, and it is a very expensive affair at scale! Budgets for large teams are hard to control because costs are unpredictable and rise steeply as the size of the codebase or the number of developers goes up. For a team of 10-25 developers, AI coding tools alone could cost an additional $75k per month (as per the article ‘What a CTO must budget for AI coding tools’). In reality, neither the developer skillset needed nor the costs are dramatically reduced by using AI.
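As a quick back-of-the-envelope check, the quoted figure can be broken down per developer. The sketch below uses only the two numbers from the paragraph above ($75k per month, 10-25 developers) and assumes, for simplicity, that the same total applies at both ends of the team size range; the function name is illustrative.

```python
ADDITIONAL_MONTHLY_COST_USD = 75_000  # figure quoted in the text
TEAM_SIZES = (10, 25)                 # team size range quoted in the text


def cost_per_developer(total_monthly_usd: float, developers: int) -> float:
    """Split a monthly tooling bill evenly across the team."""
    if developers <= 0:
        raise ValueError("developers must be positive")
    return total_monthly_usd / developers


for size in TEAM_SIZES:
    monthly = cost_per_developer(ADDITIONAL_MONTHLY_COST_USD, size)
    print(f"{size} developers: ${monthly:,.0f} per developer per month")

annual_total_usd = ADDITIONAL_MONTHLY_COST_USD * 12
print(f"Team total per year: ${annual_total_usd:,.0f}")
# 10 developers: $7,500 per developer per month
# 25 developers: $3,000 per developer per month
# Team total per year: $900,000
```

Under that assumption the tooling bill lands between $3,000 and $7,500 per developer per month, which is why it needs its own budget line rather than being treated as a rounding error.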

The implications of AI code generation on developer productivity

The unpredictability brought by vibe coding platforms makes it difficult for big organizations and large development teams to adopt AI with the objective of reducing costs or accelerating time to market. However, LLM coding assistance tools like Cursor, GitHub Copilot and Claude Code are well adopted by experienced developers, who control and govern the output generated by these tools before it is pushed to production.

Coding assistance tools differ from vibe coding platforms in the following aspects. They are:

- Framework agnostic (i.e., do not understand web, mobile, micro services etc.)

- Programming language or syntax aware (TypeScript, Java, Python etc.)

- Used for code suggestions, refactoring, identifying bugs etc.

- Reliant on developers to define architecture and best practices (Cursor rules etc.)

- Able to learn from existing code architecture, naming conventions etc.

- Suitable for both brown-field & green-field app development

However, the following are some of the challenges faced by developers who have adopted code assistance tools:

- AI hallucinations & non-deterministic output make developers spend more time debugging

- AI generated code is hard to refine through iterations

- As the codebase grows, AI produces incomplete suggestions and truncated output

- False sense of security and disregard for performance

1. Developers spend more time debugging

One of the challenges with AI is non-deterministic output. Developers use several mitigation strategies to make the LLM generate consistent output: prompts, context refinement with appropriate code examples, RAG (Retrieval Augmented Generation) based frameworks and guardrails. While some development teams that are further along the AI adoption maturity curve have worked out how to use these approaches to improve the quality of AI generated code, it is not practical for everyone to adopt them and succeed.

A recent report published by harness.io (Beyond CodeGen: The role of AI in the SDLC) states that 67% of developers spend more time debugging AI generated code. The generated code can include outdated dependencies and insecure coding patterns, and developers have to spend additional time identifying these problems. While there is initial acceleration, the time it takes to identify and address such problems in AI generated code is a serious setback to developer productivity.
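As an illustration of what a lightweight guardrail might look like, the sketch below checks generated Python source before a developer accepts it: it rejects code that does not parse and flags calls on a deny list. The deny list and the function name `check_generated_code` are assumptions for this example; a real setup would more likely rely on established linters and security scanners.

```python
import ast

# Hypothetical policy: calls a team might refuse to accept from generated code.
DISALLOWED_CALLS = {"eval", "exec"}


def check_generated_code(source: str) -> list[str]:
    """Return a list of problems found in generated Python source."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        # Truncated or malformed output never reaches the deny-list check.
        return [f"syntax error on line {exc.lineno}: {exc.msg}"]

    problems = []
    for node in ast.walk(tree):
        # Only direct calls by name are detected, e.g. eval(...).
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in DISALLOWED_CALLS:
                problems.append(f"line {node.lineno}: call to {node.func.id}()")
    return problems


findings = check_generated_code("x = eval(user_input)")
```

A check like this only catches the patterns it was told about, so it complements human review rather than replacing it.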

2. Hard to refine AI generated code through iterations

Building a typical code solution with a traditional coding approach starts with an initial working prototype, which developers then rewrite and refactor to meet the organization’s architecture best practices, security needs, and requirements for readability and maintainability. With AI code generators, developers get a slightly better and faster start, but they inevitably need to iterate with prompts to make the result accurate.

AI generates different output in every iterative step, making it harder for developers to keep track of changes. Customizations made previously get overwritten during iterations, leading to a lack of control over code generation. As feature development progresses, after a few prompt iterations, previously working capabilities can start to fail, leading to developer frustration and a lot of rework.
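One way to keep track of what an iteration changed is to diff the previous and the regenerated version and check that hand-written customizations survived. The sketch below uses Python's standard `difflib`; the idea of a list of protected lines and the helper names are assumptions made for this example.

```python
import difflib


def removed_lines(previous: str, current: str) -> list[str]:
    """Lines present in the previous version but dropped by the new one."""
    diff = difflib.ndiff(previous.splitlines(), current.splitlines())
    # ndiff marks lines that exist only in the first sequence with "- ".
    return [line[2:] for line in diff if line.startswith("- ")]


def lost_customizations(current: str, protected: list[str]) -> list[str]:
    """Protected lines that no longer appear anywhere in the new version."""
    current_lines = {line.strip() for line in current.splitlines()}
    return [line for line in protected if line.strip() not in current_lines]


# Hypothetical example: the regenerated code silently drops a manual fix.
custom = "total = round(total, 2)  # custom: finance rounding rule"
previous = f"def price(total):\n    {custom}\n    return total\n"
current = "def price(total):\n    return total\n"

dropped = removed_lines(previous, current)
lost = lost_customizations(current, [custom])
```

Running such a check after every prompt iteration makes overwritten customizations visible immediately instead of surfacing later as regressions.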

3. Inaccurate and incomplete code suggestions

As the size of the codebase increases, code suggestions become too inconsistent for developers to accept. This is largely attributed to the limited LLM context window and to how well developers can optimize context using code indexing, other RAG techniques, MCP servers etc. More compute is needed as the context size increases, and LLMs have usage limits and restrictions, resulting in improper output or increased response times.
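The trade-off between relevance and context size can be sketched as a small selection problem: rank code snippets by how well they match the request, then add them until a token budget is used up. The keyword-overlap scoring, the four-characters-per-token estimate and all names below are simplifying assumptions; production tools typically use embeddings and real tokenizers.

```python
import re


def words(text: str) -> set[str]:
    """Lowercase identifiers and words found in the text."""
    return set(re.findall(r"[a-z_]+", text.lower()))


def select_context(query: str, snippets: dict[str, str], budget_tokens: int) -> list[str]:
    """Pick the most relevant snippet names that fit within the token budget."""
    query_words = words(query)
    ranked = sorted(
        snippets.items(),
        key=lambda item: len(query_words & words(item[1])),
        reverse=True,
    )
    chosen, used = [], 0
    for name, text in ranked:
        if not query_words & words(text):
            continue  # no overlap with the request, skip it
        cost = len(text) // 4 + 1  # assumption: about 4 characters per token
        if used + cost > budget_tokens:
            continue  # relevant, but does not fit in the remaining budget
        chosen.append(name)
        used += cost
    return chosen


# Hypothetical codebase index with one small and one large relevant file.
snippets = {
    "auth.py": "def login(user, password): return check_password(user, password)",
    "cart.py": "def add_to_cart(cart, item): cart.append(item)",
    "big_auth_module.py": "def login(user): pass\n" + "# padding\n" * 200,
}
query = "fix the login password check"
small_budget = select_context(query, snippets, budget_tokens=100)
large_budget = select_context(query, snippets, budget_tokens=1000)
```

With the small budget only `auth.py` fits and the large module is left out even though it is relevant, which is one way a growing codebase leads to incomplete context and weaker suggestions.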

Today’s LLM architectures, context windows and caching techniques are not well suited to large codebases. While MCP (Model Context Protocol) gives an LLM the ability to retrieve additional context so that it can produce code more accurately, it has also increased the complexity of developer tooling.

4. False sense of security and disregard for performance

“A human sees a suspicious URL; an AI sees valid syntax. And that semantic gap becomes a security gap.” (Bruce Schneier, a renowned security expert)

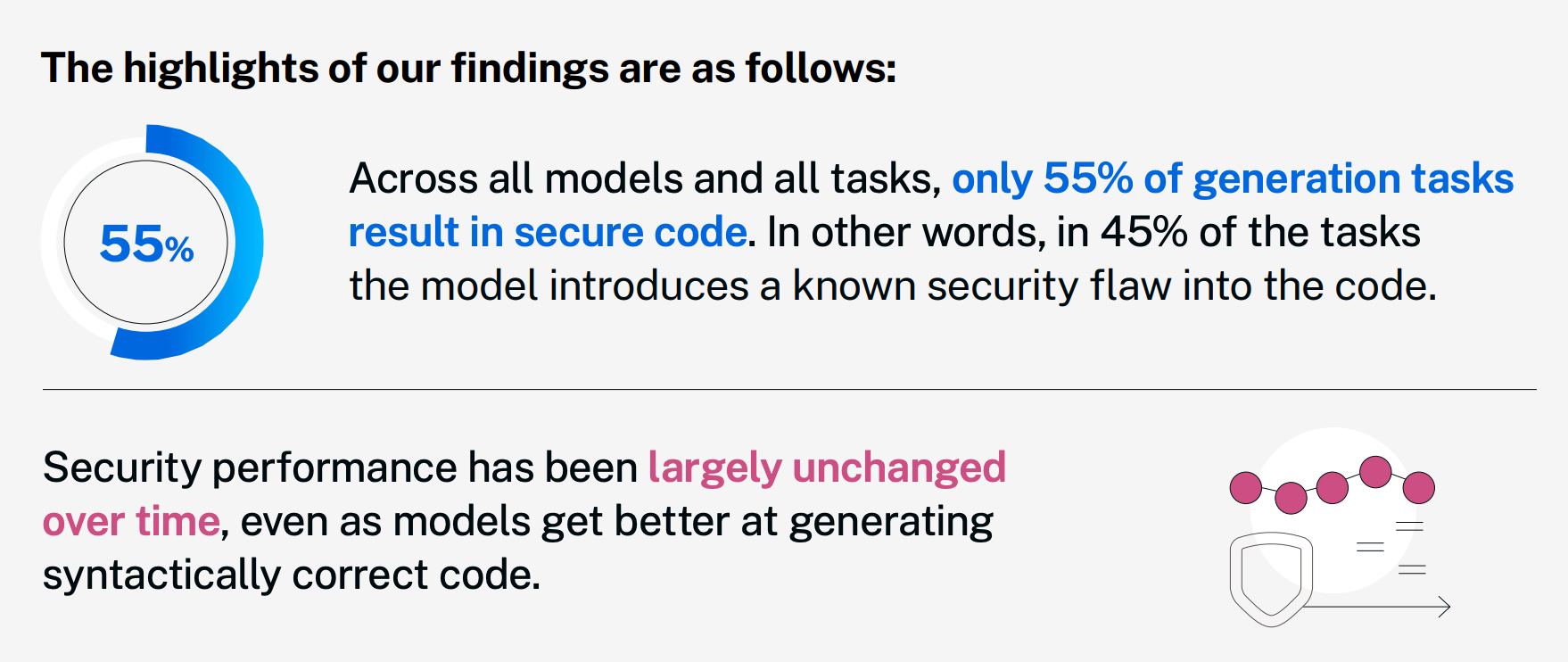

The Veracode Gen AI code security report states that 45% of the code generated by LLMs has known security flaws, which are identified during vulnerability assessment checks. One explanation is the training data: LLMs are trained on publicly available source repositories, which may themselves contain vulnerabilities. Another hypothesis is that most secure implementations and better training examples are not in public repositories, and that using RLHF to train models on all possible secure scenarios may not be feasible.

Generating performant code requires deeper understanding and systems-level thinking, which requires a lot more compute. LLMs are only as good as their training scenarios, and it is unknown whether they have been trained on complex use cases and a wide spectrum of niche scenarios. The AI report from harness.io indicates that performance problems are reported in AI generated code 52% of the time.

Conclusion: What’s the road ahead?

AI code gen platforms, AI model providers, the tech community and investors are all very bullish about the AI coder dream, but the reality is far from it. While LLM technology keeps advancing and the quality of AI generated code keeps improving and becoming more adaptable, a human-first approach is needed to build reliable app solutions today.

Developers have to be more cautious with AI generated code and employ more checks and balances in their development environment, such as:

- Additional verification steps for code consistency & reliability (human-first)

- Clearly defined rules for architecture, best practices, code samples, etc.

- Security vulnerability assessment checks to identify issues very early during development

- Automated test cases with complete coverage, to ensure AI generated code doesn’t impact existing functionality
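The last item in the list can be sketched with Python's standard `unittest` module: pin down the current behavior of existing code so that a regenerated version which changes it fails fast. The function `apply_discount` and its rules are hypothetical stand-ins for existing functionality, and these few tests illustrate the idea rather than complete coverage.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical existing function whose behavior must not change."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class DiscountRegressionTests(unittest.TestCase):
    """Tests that lock in behavior before AI generated changes are merged."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_and_full_discount(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)
        self.assertEqual(apply_discount(80.0, 100), 0.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)


def run_regression_suite() -> unittest.TestResult:
    """Run the suite programmatically, e.g. as a pre-merge check."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountRegressionTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Calling `run_regression_suite().wasSuccessful()` after each AI assisted change gives a simple pass or fail signal that can gate the merge.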

AI code generation platforms need to be more than just glorified prompt wrappers with smart developer interfaces. They need to tackle the real challenges of building application solutions: security, reusability, customizability and scalability. These platforms should focus on reducing the skillset needed to work with AI generated code and enable a human-first approach, so that developers stay in control and succeed.

The future of app development is going to be very exciting, with mature AI code generation reducing the skills required, shortening time to market and handling complex use cases. Development teams will be able to lean on AI coding platforms to reduce technology debt, cut the time taken to address security vulnerabilities and build scalable enterprise-grade solutions with more confidence.